By adopting A.M. No. 25-11-28-SC, the Supreme Court has placed the Philippines among the first nations to establish a formal, domain-specific governance framework that subjects artificial intelligence to the discipline of constitutional values, judicial ethics, human accountability, and the rule of law.

Full copy of the resolution: Governance Framework on the Use of Human-Centered Augmented Intelligence in the Judiciary

The Supreme Court of the Philippines has issued one of the most consequential public-sector AI governance measures yet seen in the country. Through A.M. No. 25-11-28-SC, adopted on February 18, 2026, the Court approved the Governance Framework on the Use of Human-Centered Augmented Intelligence in the Judiciary. The document is legally significant and institutionally ambitious, and it is far more than a ceremonial policy statement about innovation. It is a binding governance framework that tries to answer a harder question: how should a constitutional court permit the use of artificial intelligence in the administration of justice without weakening human judgment, due process, and public trust?

That question is no longer theoretical. Courts around the world are confronting the spread of AI-assisted transcription, legal research, drafting support, translation, document review, analytics, and public-facing digital services. At the same time, legal systems are also contending with AI-related risks that are especially dangerous in justice settings: hallucinated authorities, embedded bias, opaque decision-making, data leakage, automated overreliance, and the temptation to confuse speed with legitimacy.

The Philippine Supreme Court’s framework begins from that reality. It recognizes that AI can improve efficiency and expand access to justice, but it also warns that, without appropriate human oversight and control, AI can amplify bias, inequality, discrimination, misinformation, and other forms of harm. In that sense, the document is best understood not as a technology policy alone, but as a rule-of-law response to a structural shift in how legal work is being done.

Its importance lies first in its legal posture. The Court does not treat AI as an irresistible force to which institutions must simply adapt. Nor does it frame AI as inherently suspect and therefore unfit for judicial use. Instead, it adopts a middle course: AI may be used, but only on terms consistent with constitutional duty, ethical responsibility, and institutional control. The resolution expressly grounds itself in Article VIII, Section 5(5) of the Constitution, invoking the Supreme Court’s power to promulgate rules concerning constitutional rights, pleading, practice, procedure, admission to the practice of law, the Integrated Bar, and legal assistance to the underprivileged. That grounding matters. It establishes that AI governance in the justice sector is not merely managerial experimentation. It falls within the Court’s constitutional obligation to protect the integrity of adjudication and the administration of justice.

The framework’s language is also deliberate. It does not center “artificial intelligence” as an autonomous actor. It uses the phrase “human-centered augmented intelligence,” defined as AI developed, deployed, and used consistently with the framework’s ethical principles and management governance. That wording reflects a deeper legal and institutional choice. The Court is not conceding that machine outputs are substitutes for legal judgment. It is marking out a subordinate role for AI: a support technology that may enhance human work, but cannot supplant the human responsibility at the heart of judging, lawyering, and court administration. The distinction may seem semantic to some readers. In practice, it is foundational. It determines whether AI is understood as an instrument under law or as a quasi-authoritative system operating beside it.

The breadth of the framework is one of its strongest features. It applies not only to justices and judges, but also to court officials and employees, court users, and vendors or third-party organizations involved in the design, development, and use of AI tools on behalf of the Judiciary. That wide scope is not overreach. It reflects a realistic understanding of how AI risk enters institutional systems. Harm does not begin only when a judge sees an output. It can arise upstream in procurement, model design, training data, deployment decisions, security architecture, and submission practices by lawyers and litigants. By covering the full chain of actors, the Court avoids a common weakness in technology policy: focusing on end users while ignoring the infrastructures and incentives that shape the tool long before it reaches the courtroom.

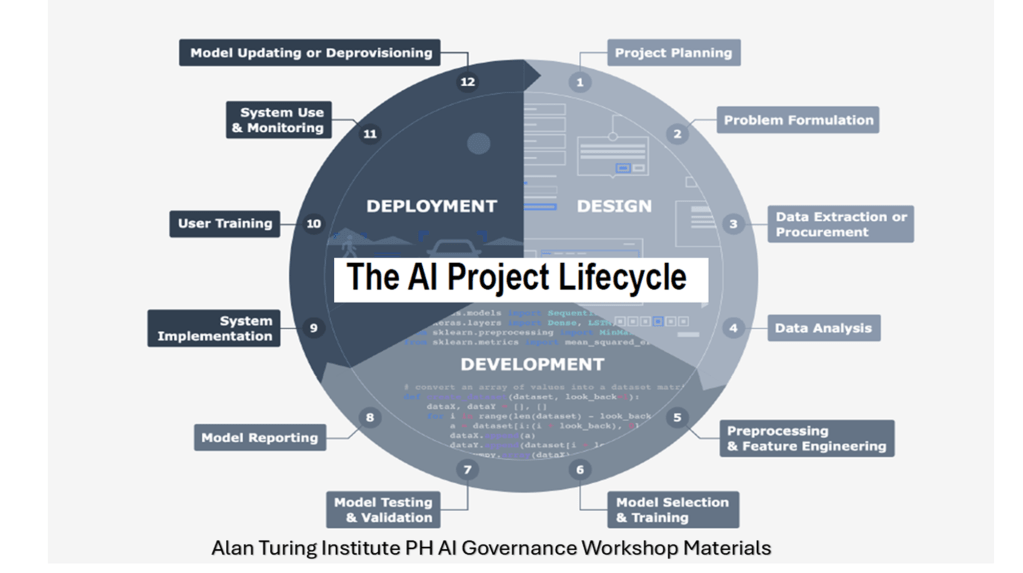

Substantively, the framework is organized around ethical governance and management governance. This is an important design choice. Many AI documents stop at general principles such as fairness, accountability, and transparency, leaving implementation vague. The Supreme Court’s framework does not. It enumerates ethical principles and then links them to governance structures, adoption rules, risk models, procurement requirements, and institutional roles. Even its own executive summary describes the framework as covering two forms of technology governance: first, the ethical principles to be addressed at every stage of the AI life cycle, and second, the operationalization of those principles for the specific needs of the Philippine Judiciary. In other words, the Court is trying to convert high-level values into administrative practice.

The first principle is human-centeredness. The framework states that AI use must maximize the technology’s potential to improve efficiency, accuracy, and human capability while minimizing identified and unanticipated risks and avoiding the amplification of existing harms. It anchors AI use in human values, including the rule of law, dignity, autonomy, privacy, data protection, fairness, non-discrimination, and social justice. It also warns against indiscriminate AI use that could degrade cognitive ability and critical thinking, and it insists that AI should primarily function as a support or augmentation tool rather than a mechanism that overrides human judgment. This is not generic ethics language. It is a direct institutional rejection of automation as an end in itself. The Court is saying that in justice work, human capacity must be strengthened, not displaced.

The second principle is respect for human rights and harm avoidance. Here the framework is especially strong. It states that all technology use in the Judiciary must be consistent with the Constitution and international human rights instruments to which the Philippines is a party. If the use of an AI tool has the effect of limiting human rights or fundamental freedoms, that use must have a legal basis and must not go beyond what is reasonable, proportional, necessary, and least intrusive. More significantly, the framework provides that if there is evidence that a tool presents equivalent or greater harm, or even a probability of such harm, in violation of rights or contrary to the rule of law, then it should not be used. That is a robust safeguard. It places rights and legality ahead of novelty, vendor promise, or administrative convenience.

The third principle, transparency, is treated not as a public-relations virtue but as a legal obligation. The Court requires AI use in the Judiciary to be understandable and intelligible to the public. It says the capabilities and risks of AI must not be obscured by technical complexity. It requires the Judiciary to give the public useful information about how AI tools are developed, trained, and deployed, subject to the limits imposed by confidentiality, judicial security, and legal ethics. It then goes further by prescribing minimum disclosures when AI is used in judicial processes: the tool and version used, the purpose of use, the extent of reliance on the tool or its output, the degree of human oversight, preservation of outputs for possible inquiry, compliance with the framework, and a statement that the user bears ultimate responsibility. This is unusually concrete. In procedural terms, it functions as a due-process-oriented record of AI involvement.

That transparency requirement becomes even more notable in adjudicatory settings. The framework mandates disclosure, in clear and plain language accessible to the parties, when members of the Judiciary or court personnel use AI tools in preparing court-issued documents for tasks such as voice-to-text transcription, translation, automated citation generation, legal research, summarization, optical character recognition, proofreading, and redaction or sanitization of data. The message is unmistakable: if AI touches adjudicatory work, the fact of that use cannot remain invisible. In a justice system, hidden automation can distort accountability. The Court is trying to prevent that by making AI use visible wherever it may affect the integrity of legal process.

The fourth principle, which combines accountability, human oversight, and continuous monitoring, is arguably the constitutional heart of the framework. It states that human control must remain paramount in any use of AI and that AI must not replace human discernment. Users are personally responsible for the output a tool produces and for its consequences. All outputs must be reviewed and approved by human beings. Most critically, the framework draws a bright line around adjudication: under no circumstance should AI tools or their outputs serve as the sole, primary, or determinative basis of any adjudicatory outcome, and legal reasoning and final conclusions affecting the rights and duties of parties must be independently formed by the human decision-maker. It also forecloses a defense that will likely become more common in professional practice: judges, court employees, and lawyers may not evade responsibility for violations of law or ethics by claiming that the fault lay with an AI tool.

That non-delegation principle is what distinguishes serious judicial AI governance from mere administrative digitization. A court can automate scheduling, translation assistance, transcription support, and certain back-office functions without changing the nature of judging. But once machine outputs begin to drive legal conclusions, assess credibility, or determine the rights of parties, the institution risks hollowing out the human responsibility on which judicial legitimacy depends. The Philippine framework recognizes this risk explicitly. It permits augmentation but forbids abdication. In a period when some sectors are tempted to equate automated efficiency with institutional progress, that is a consequential legal line to draw.

The framework also addresses fairness and non-discrimination with welcome candor. It acknowledges that AI tools are made and used by human beings who are themselves prone to conscious and unconscious bias. It warns that training datasets may be incomplete, contextually inappropriate, or may overvalue or undervalue certain demographics. It also notes that AI can exacerbate existing harms against vulnerable, underrepresented, and marginalized populations. To guard against those possibilities, the development and use of AI in the Judiciary must be protected not only against overt discrimination but also against perpetuating old inequities or creating new ones. The Judiciary is further directed to promote capability-building against algorithmic bias, automation bias, and related cognitive fallacies. This is especially important in courts, where even small distortions in language, categorization, or inferred risk can have significant consequences for liberty, property, family relations, and access to remedies.

Privacy and data protection are given similarly serious treatment. The framework recognizes that the Judiciary processes large amounts of personal information, sensitive personal information, confidential data, and information protected for reasons of public safety and national security. It requires privacy and data protection to be built into AI use from design through deployment. Users must be aware of what data is entered into AI tools, how it is processed, and who has access to it. The framework’s data governance provisions also call for data classification, retention, access controls, sharing rules, data minimization, anonymization, monitoring, auditing, tagging, incident response, and breach procedures. It further requires ongoing coordination between the AI Committee and the Court’s Data Protection Officer on privacy impact assessments, complaints, and data-sharing arrangements. This reflects a clear understanding that in court systems, data misuse is not a secondary technical problem. It is a rights issue.

On security, safety, and robustness, the framework takes a preventive approach. It says a comprehensive risk assessment is a necessary prerequisite before any AI tool is implemented for any reason in any part of the Judiciary, and that this risk assessment must be explained in plain language to end users. The framework also requires resilience against attacks such as data poisoning and model leakage. Safety measures must be in place to minimize unintended consequences, and AI systems must be continuously classified according to risk. Predictive AI is treated as high risk because of the consequences of inaccurate or inadequate predictions. This is a prudent stance. Predictive systems in justice settings carry unusual dangers because they may appear objective even when they rely on proxies, weak correlations, or incomplete institutional data.

Notably, the Court adopts a risk-based structure that will be familiar to observers of international AI regulation. Its framework identifies prohibited AI systems, high-risk AI systems, limited-risk AI systems, and minimal-risk AI systems. Prohibited systems include those posing unacceptable risks to fundamental rights and human safety, such as AI for cognitive behavioral manipulation or real-time biometrics-based identification and tracking of people. High-risk systems are those that directly affect fundamental rights and human safety. Limited-risk systems include tools such as customer-service chatbots and transcription. Minimal-risk systems are those that pose little threat and may be addressed chiefly through disclosure obligations. This tiered approach matters because it signals that the Court is not regulating “AI” as a single category. It is regulating according to function, context, and harm.

The framework’s management governance sections are where its ambition becomes most evident. The Supreme Court En Banc retains oversight and supervisory authority over all AI-related policies and processes in the Judiciary, the Bench, and the Bar. It is also the body that approves, on a case-by-case basis, the allowable extent of AI use. No AI tool may be used unless authorized by the En Banc. That requirement may strike some readers as centralized, but it reflects the Court’s desire to avoid fragmented experimentation across judicial offices. In high-trust institutions, uncoordinated technology adoption can create inconsistent safeguards, uneven competence, and unseen risks. Central authorization is a mechanism for maintaining coherence.

The supporting institutional architecture is similarly detailed. The Management Information Systems Office is tasked to advise the Court on technical aspects of AI use. The Data Protection Officer is responsible for safeguarding data used in connection with AI. The Office of the Court Administrator must implement change management, capacity-building, and upskilling programs for first- and second-level courts. The Philippine Judicial Academy is directed to create training opportunities in AI and the law. Legal education institutions and the legal academe are encouraged to conduct research and offer courses in AI, ethics, applications, and regulation, including specific instruction on generative AI’s uses, limits, and risks. The Integrated Bar of the Philippines is expected to monitor the profession’s engagement with AI and expand continuing legal education on the topic. This is governance by institutions, not slogans.

To make that architecture durable, the framework establishes a permanent Committee on Human-Centered Augmented Intelligence. The Committee is an advisory body tasked to recommend policy guidelines, oversee development and deployment, supervise procurement from third-party providers, evaluate risks and ethical issues before deployment, develop incident and vulnerability reporting processes, recommend continuous risk-management measures, and conduct capacity-building. Its functions also extend to the legal profession, not just court administration. That breadth is sensible. The effects of AI on judicial integrity do not stop at the courthouse door; they also arise in law practice, court submissions, evidence handling, and professional responsibility.

The framework is especially careful about procurement and third-party tools. Developers of AI tools procured or intended to be procured by the Judiciary must disclose the tool’s logic, limitations, and safeguards. If a tool is wholly or partly owned, designed, or developed by a third party, that party must fully disclose the nature of the tool, the processes involved, the data models used, and other significant components. Claims of trade secrets or proprietary confidentiality must be justified if they would prevent or limit necessary disclosure. This is a critical provision. One of the central governance problems in public-sector AI is the opacity created by vendor systems that public institutions cannot meaningfully inspect. The Court is signaling that proprietary black boxes are not acceptable where judicial integrity is at stake.

The framework’s adoption of human-control models also shows its engagement with international governance debates. It refers to human-in-the-loop, human-on-the-loop, and human-in-command approaches, and it expressly notes that these mechanisms are to be applied depending on the degree of autonomy and risk associated with a tool. In substance, these models help translate the abstract requirement of human oversight into different operational settings: intervention at the output stage, monitoring during operation, and broader control over when and how a tool is used and how it interacts with external forces. In policy terms, this is significant because “human oversight” is often invoked loosely. The framework attempts to specify what human control should look like.

One of the most important aspects of the framework is its explicit international orientation. The Court states that it may adopt and localize principles and best practices from other jurisdictions and organizations. It specifically names the European Union AI Act; UNESCO’s Recommendation on the Ethics of Artificial Intelligence and its Guidelines for the Use of AI Systems in Courts and Tribunals; the Global Toolkit on AI and the Rule of Law for the Judiciary; the European Ethical Charter on AI in judicial systems; the European Commission High-Level Expert Group’s Ethics Guidelines for Trustworthy AI; the ASEAN Guide on AI Governance and Ethics; the Expanded ASEAN Guide on Generative AI; the Council of ASEAN Chief Justices’ framework for ASEAN judiciaries; the Council of Europe’s AI convention; and the OECD AI Principles. That list matters because it shows the Court situating its framework within the mainstream of emerging international AI governance while still localizing it for Philippine constitutional and institutional conditions.

The Philippine framework mirrors several of the central themes now found across major AI governance efforts: human-centeredness, risk-based regulation, accountability, transparency, auditability, traceability, data governance, continuous monitoring, and institutional capacity-building. Yet it also adds something important of its own. International documents often articulate broad norms that states or institutions are encouraged to adopt. The Supreme Court has taken those norms and converted them into enforceable judicial guardrails for a specific branch of government. In that sense, the document is best understood as a localization exercise with legal teeth. It translates global governance language into internal rules for a justice institution that cannot afford ambiguity about responsibility.

This matters beyond the Judiciary. For lawyers, the framework signals that AI-assisted work in research, drafting, submissions, and evidence handling will be judged against standards of competence, disclosure, and ethical accountability. For technology providers, it means that selling into the justice sector will require more than claims of convenience or productivity. It will require traceability, explainability, procurement disclosure, and respect for rights-based limits. For legal education, it confirms that AI literacy is no longer peripheral to the future of the profession. And for the public, it offers a crucial assurance: the Philippine Judiciary is not embracing AI on faith. It is insisting that modernization occur under law, not around it.

There are, of course, difficult questions ahead. Implementation will determine whether the framework becomes a living governance system or remains largely aspirational. The Court will need to issue detailed guidelines, build internal technical competence, maintain discipline in procurement, and ensure that disclosure and review practices are actually followed in day-to-day operations. It will also have to manage the tension between innovation and caution: move too slowly, and the institution may miss tools that can genuinely improve access and efficiency; move too quickly, and it risks embedding systems that are poorly tested or weakly understood. But the framework is notable precisely because it anticipates those governance challenges. It requires periodic review, continuous monitoring, incident reporting, risk assessment, data governance, and institutional learning.

In the end, A.M. No. 25-11-28-SC makes a larger constitutional point. In the administration of justice, AI may assist, but it may not govern. It may support research, transcription, translation, document management, and other functions, but it may not replace the independent formation of legal reasoning. It may help modernize the courts, but it cannot dilute human responsibility, judicial ethics, or due process. That is the framework’s central achievement. It refuses the false choice between technological relevance and constitutional fidelity. Instead, it insists that any modernization worthy of a court must proceed on human-centered, rights-respecting, and institutionally accountable terms.

For the Philippines, that is not only a prudent response to a fast-changing technology. It is a model of how public institutions should govern powerful systems before those systems quietly begin to govern them.

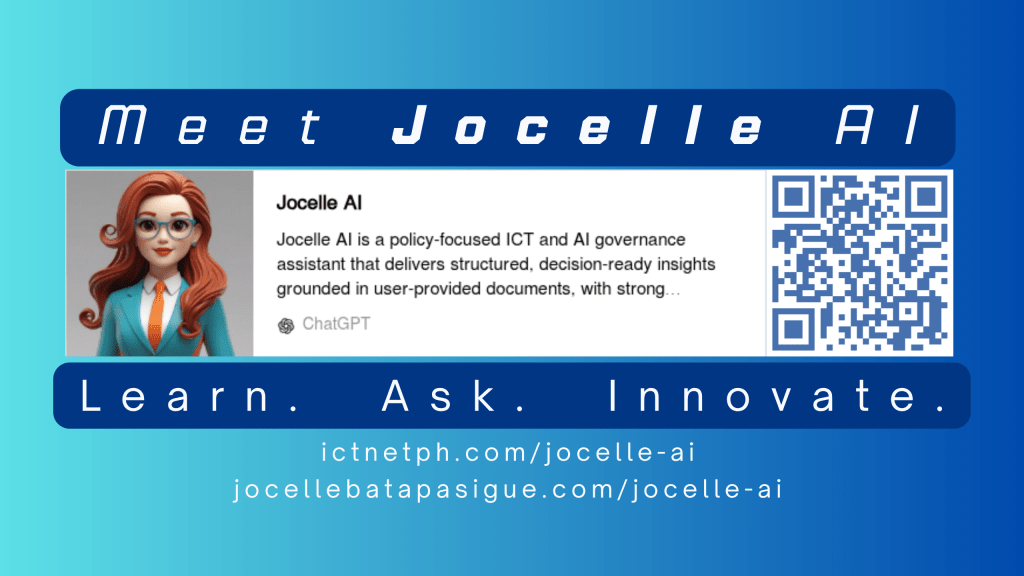

NOTE: The author, Jocelle Batapa-Sigue, has trained her own AI, JOCELLE AI, which is available on OpenAI's ChatGPT.

Jocelle Batapa-Sigue is a Philippine ICT and AI governance professional whose work focuses on the intersection of technology, law, public policy, and institutional innovation. Her writing examines how emerging technologies should be governed in ways that uphold accountability, human rights, and the rule of law. She has completed executive and professional training in AI governance, including the LSE Executive Education programme for the UK Government on advancing AI governance in the Philippines, as well as the International Telecommunication Union (ITU) Academy training course on AI governance in practice: developing secure and innovative frameworks.
